Music of the Future

Scientists create new technologies that can make any surface — from a desk to a wall — sing.

People don’t have to go to outer space to make music in new ways. Researchers are using computers and sensors to build technologies that will let people do that right here on Earth.

Credit: NASA/JPL

The musical instruments of the future may be right in front of your eyes and on the tables, walls and windows around you. All it takes to use them is the right hardware, and a little imagination.

In Switzerland, a team of scientists and artists is working together on new technology that can transform almost any surface into a musical instrument. The technology is called MUTE, short for Multi-Touch Everywhere. Using MUTE, a person can program a computer to translate taps on different parts of a table or a wall into different sounds.

For example, you may record and save different sounds on a computer — anything from a snare drum or trumpet to clapping hands or a sneeze. Then, you program your computer to play one of these recorded sound snippets whenever you tap a certain spot on a table top or wall. The left side of a table might play snare drum beats, the right side a melody on a trumpet. If you tap the two sides at the same time, you’ll hear both sounds come together as a song. The system uses a camera and lasers to see where you’ve tapped on the table.
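To make the idea of tap zones concrete, here is a minimal Python sketch of the lookup step only. It assumes the tap position has already been measured. The rectangular zones, the metre coordinates and names such as `Region` and `sound_for_tap` are made up for illustration; this is not MUTE's actual software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Region:
    """A rectangular patch of the surface, in metres from one corner (hypothetical layout)."""
    name: str  # label of the recorded sound snippet
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def sound_for_tap(x: float, y: float, regions: list) -> Optional[str]:
    """Return the sound assigned to the first region containing the tap, or None."""
    for region in regions:
        if region.contains(x, y):
            return region.name
    return None


def sounds_for_taps(taps, regions):
    """Several taps at the same moment can trigger several sounds at once."""
    hits = (sound_for_tap(x, y, regions) for x, y in taps)
    return [name for name in hits if name is not None]


# A 1.2 m x 0.8 m table: left half plays a snare drum, right half a trumpet.
TABLE = [
    Region("snare_drum", 0.0, 0.6, 0.0, 0.8),
    Region("trumpet", 0.6, 1.2, 0.0, 0.8),
]

print(sound_for_tap(0.2, 0.4, TABLE))                     # snare_drum
print(sounds_for_taps([(0.2, 0.4), (1.0, 0.3)], TABLE))   # ['snare_drum', 'trumpet']
```

Because the zones are checked in order, a tap landing exactly on a shared border goes to whichever zone is listed first.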

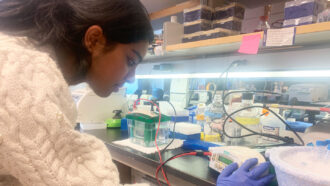

This is the computer program used to assign different sounds to different parts of a surface. Sensors tell the computer when someone taps on one of the colored regions.

Credit: Alain Crevoisier / Future Instruments

What’s more, the programmed surface doesn’t even have to be solid — it can float right in front of you, explains musician and MUTE developer Alain Crevoisier. “It can even work in the air,” he says. “Since the lasers are creating a plane of light, what we actually detect is when you cross this plane with either the hands or sticks or mallets.” Imagine, for example, a virtual piano hovering in front of your face.
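One simple way a camera could spot where a hand crosses a sheet of laser light is sketched below in Python. It assumes, purely for illustration, that each camera frame arrives as a grid of brightness values and that whatever breaks the light plane shows up as a single bright patch. The function name and the threshold of 200 are hypothetical, and the real MUTE system may work quite differently.

```python
def find_bright_spot(frame, threshold=200):
    """Return the (row, column) centre of all pixels at or above threshold.

    frame is a list of rows of brightness values (0-255). Returns None when
    nothing is bright enough, i.e. nothing is crossing the light plane.
    """
    row_total = col_total = count = 0
    for r, row in enumerate(frame):
        for c, value in enumerate(row):
            if value >= threshold:
                row_total += r
                col_total += c
                count += 1
    if count == 0:
        return None
    return (row_total / count, col_total / count)


# A tiny 5 x 5 "camera frame" in which a fingertip lights up four pixels.
frame = [[0] * 5 for _ in range(5)]
for r, c in [(1, 2), (1, 3), (2, 2), (2, 3)]:
    frame[r][c] = 255

print(find_bright_spot(frame))  # (1.5, 2.5)
```

Averaging all bright pixels only works for one touch at a time; following two hands or two mallets would require separating the bright patches first.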

When the MUTE system is installed on a surface, it can also use acoustic sensors to track the location of a performer’s tap. (For more information on acoustics, see the sidebar below the story, “What is acoustics?”) “When you tap the table you generate vibrations,” says Crevoisier, a researcher at the Music Conservatory of Geneva. The vibration travels through the surface as an acoustic wave, and when it reaches a sensor, the sensor sends an electric signal to the computer.

The device is not a musical instrument in the way we normally think about instruments. But that’s part of the beauty of it, says Crevoisier. It allows a person to be creative. “It’s more like we are providing a means for people to design their own instruments,” he says. His system adds a layer of music to already existing sounds. On a regular drum, for example, a drum beat is just the sound of the drum. But on a drum outfitted with MUTE technology, a drum beat could be both the sound of the drum and a control for some other sound layered on top of that.

Crevoisier’s work on new musical instruments grew out of his participation in a project called TAI-CHI (pronounced ty-chee), which stands for “tangible acoustic interfaces for computer-human interactions.” An interface is a device, or a group of devices working together, that lets people communicate with machines. A tangible object is one that you can touch. The keyboard of a personal computer, for example, is a tangible interface between you and your computer. So is your mouse.

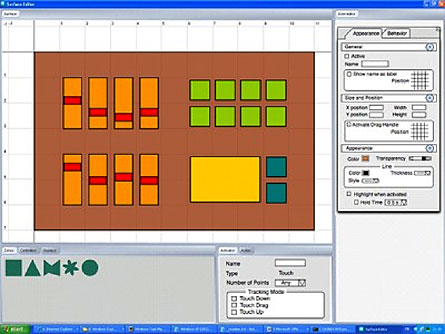

Sound waves are described by their height, called amplitude, and by how many times they repeat each second, called frequency. At top, the sound waves have a lower frequency and, to our ears, a lower pitch. The bottom image shows a sound wave with a higher frequency, which we would hear as a higher-pitched note.

Credit: NOAA Ocean Explorer
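The two measurements in the wave picture can be seen in a few lines of Python. This sketch builds a pure tone, y(t) = A·sin(2πft), where A is the amplitude and f is the frequency in cycles per second, and then counts how many times the wave repeats. The rate of 8,000 samples per second and the example frequencies of 220 and 440 are arbitrary choices for illustration.

```python
import math


def sine_wave(frequency_hz, amplitude, duration_s, sample_rate=8000):
    """Return samples of a pure tone.

    amplitude sets how tall the wave is; frequency_hz sets how many
    times per second it repeats.
    """
    n = int(duration_s * sample_rate)
    return [amplitude * math.sin(2 * math.pi * frequency_hz * i / sample_rate)
            for i in range(n)]


def count_cycles(samples):
    """Count upward zero crossings, roughly one per repetition of the wave."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)


low = sine_wave(220, 1.0, 1.0)   # lower frequency: heard as a lower pitch
high = sine_wave(440, 1.0, 1.0)  # higher frequency: heard as a higher pitch
print(count_cycles(low), count_cycles(high))  # about 220 and about 440
```

Doubling the frequency doubles the number of repetitions per second, which our ears hear as the same note one octave higher; changing the amplitude would make the tone louder or softer without changing its pitch.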

In the TAI-CHI project, Crevoisier and his colleagues showed that acoustics, or the science of sound, could be used to turn any surface into a musical instrument. They also showed that the technology could lead to a new kind of interface.

Here’s how: The sensors pinpoint the place on a surface where a person taps.

One way to do this requires at least three sensors on the surface. When a person taps the table, vibrations travel through the surface to the sensors, and each sensor records the exact time the waves reach it. The waves reach nearer sensors sooner, so the differences between the arrival times reveal how much closer the tap was to one sensor than to another. By knowing where the sensors are located and what the surface is made of (which determines how fast the waves travel), a computer program can use the arrival times to figure out exactly where the surface was tapped.
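The arrival-time idea can be tried out with a small Python sketch. It assumes a flat 1 m by 1 m table with sensors at three corners and a single, known wave speed; the 500 m/s figure and all function names are hypothetical, since the real speed depends on the surface material. Because nobody knows the exact instant of the tap, the sketch compares only the differences between arrival times, and it simply tries every point on a fine grid instead of solving the equations directly.

```python
import math
from itertools import product

# Hypothetical setup: sensors at three corners of a 1 m x 1 m table.
SENSORS = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
WAVE_SPEED = 500.0  # m/s, an assumed value; the real speed depends on the material


def arrival_times(tap, sensors, speed, tap_time=0.0):
    """Simulate when the vibration from a tap reaches each sensor."""
    return [tap_time + math.dist(tap, s) / speed for s in sensors]


def locate_tap(times, sensors, speed, width=1.0, height=1.0, step=0.005):
    """Find the grid point whose predicted arrival-time differences best match the measured ones.

    The moment of the tap is unknown, so only differences relative to the
    first sensor are compared.
    """
    measured = [t - times[0] for t in times]
    best, best_err = None, math.inf
    nx, ny = int(round(width / step)), int(round(height / step))
    for i, j in product(range(nx + 1), range(ny + 1)):
        point = (i * step, j * step)
        predicted = arrival_times(point, sensors, speed)
        diffs = [t - predicted[0] for t in predicted]
        err = sum((m - d) ** 2 for m, d in zip(measured, diffs))
        if err < best_err:
            best, best_err = point, err
    return best


# Simulate a tap at (0.3 m, 0.7 m) that happens at an unknown moment (2 ms here).
times = arrival_times((0.3, 0.7), SENSORS, WAVE_SPEED, tap_time=0.002)
print(locate_tap(times, SENSORS, WAVE_SPEED))  # should land very near (0.3, 0.7)
```

A brute-force grid search like this is slower than solving the equations directly, but it is easy to follow and needs no starting guess.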

Another method to pinpoint a tap uses only one acoustic sensor, but it is more complicated. A user needs to fine-tune the device very carefully, and provide the computer with lots of information about the surface material itself.

Acoustic sensors could be used to build new kinds of computers that look nothing like traditional desktop models. Unlike a keyboard and mouse, which require a user to remain in front of a computer screen, acoustic sensors would allow a user to interact with the computer almost anywhere. You could use your fingers to draw a picture on a wall, for example, and record the drawing with your computer.

This device, built with TAI-CHI technology, is called the Sound Rose because when a person taps on the table, a colorful flower appears. A person’s taps are tracked using acoustic sensors, and the images are projected from the ceiling.

Credit: Alain Crevoisier / Future Instruments

Or imagine a restaurant owner who glues menus to the tops of his tables and installs acoustic sensors underneath. Diners could then order simply by tapping on the menu. The vibration from the tap would be picked up by the sensors, which would be able to figure out where the tap came from. A computer could match that location to a dish on the menu and send the order to the kitchen.

In another example, someone in a wheelchair could mark a spot on the wall, a table top or the arm of a chair to serve as a switch. Tapping it might turn a light on or off, turn a television’s volume up or down, or even send out a distress alarm. A simple tap on the spot could trigger the sensors, which would relay the information to a computer. The technology would allow people to turn any surface into an interface that controls some action.

Crevoisier isn’t the only one looking at ways to use acoustic sensors in future devices. Another European company, for example, is finding ways to use them to make a “smart apartment,” where any surface — mirrors, tables, counters, walls — can be used to interact with the house computer, to do tasks like change the lighting, turn on the television or raise the temperature.

TouchWall, created by Microsoft Corporation, made its debut in May of this year.

Credit: Microsoft

Other researchers around the world are developing other kinds of new tangible interfaces, though not all of them use acoustic sensors. The computer company Microsoft has developed a device called TouchWall, for example, which converts almost any surface to a computer interface by using sophisticated laser trackers, cameras and a projector.

Look around again. The future of computing and of musical instruments may be all around you.

Going Deeper: